I used to spend a chunk of my time pretending to be Winston Churchill on Twitter: @ukwarcabinet.

Quite a lot of paper (Scrap salvage campaign, 1942: Library of Congress LC-DIG-fsa-8c34784)

This was mostly a rewarding and interesting occupation for me, apart from occasionally having to write horrible things about Mahatma Gandhi. It taught me a huge amount about the day-to-day conduct of the Second World War, the minutiae of bomb attacks, how the press were handled, Commons debate, drafting the King’s speech and mobilising labour. The tweets were entirely comprised of extracts from Cabinet Papers and, in the first three years of the project, I read about 3,000 Cabinet Papers. These ranged from the fascinating cut and thrust of meetings in which the Cabinet contemplated, say, peace negotiations with Italy in 1940, to the consistently most tedious report, the weekly update on the country’s oil position. This is an invaluable dataset for the quantitative historian, and was an absolutely critical part of Britain’s war effort – but, for the tweeter panning for a soundbite, it’s drier than the ground the oil came out of.

Three thousand is a lot of Cabinet Papers. But the digitised collection covers the period from 1916 into the early 1990s – about 43,000 papers – so it’s less than 7% of the total. And wartime Cabinet Papers are not exactly representative of the Cabinet’s work throughout the majority of the 20th century.

In the summer, I had the chance to work with the History Lab at Columbia University. They have done a lot of work with a set of documents known as the Foreign Relations of the United States. FRUS is a little like English Historical Documents – big books of key documents selected by historians – except that FRUS has been published continuously from the 19th century to today (currently reaching into the 1980s) and consequently consists of over 450 volumes.

The History Lab have used FRUS, alongside other chunky but narrower corpuses such as transcripts of Henry Kissinger’s phone calls or Hillary Clinton’s emails. The team use a range of techniques to explore and describe these documents and the aim (among other experiments) was to use some of them to examine the Cabinet Papers.

One of the techniques I experimented with was topic modelling. Basically, we assume that documents are about things and that these things can be identified by looking at the words that comprise the documents.[ref]This is not necessarily true. For instance, ‘The Lion, The Witch and the Wardrobe’ by CS Lewis is about those things but it is also, in another way, about God, Jesus and Christianity. But none of those words appear in the text. So this method has its limits.[/ref] We identify groups of words which appear in close proximity to each other and then look at how these are distributed throughout the corpus. But probably you should read David Blei on this since he invented one of the most popular mathematical approaches for topic modelling. What we end up with are a range of topics we reckon a collection covers and an understanding of which documents are strong in which topics. For example, a text such as ‘Biggles Takes It Rough’ might, among a collection of other books, rate comparatively highly for topics like ‘aircraft’ and not very high for topics like ‘quantum physics’ or ‘toxic masculinity’.

In order to generate a set of 60 topics for the Cabinet Papers I used MALLET (the Machine Learning for Language Toolkit) and I am very grateful to Shawn Graham, Scott Weingart, and Ian Milligan for their indispensable getting started guide on the Programming Historian website. MALLET gathers the topics and calculates how strongly they are represented in each document.

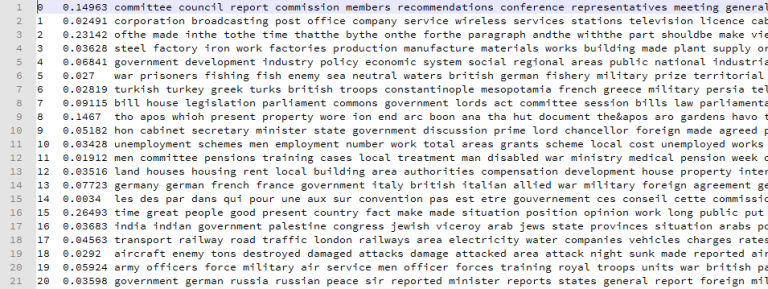

Some output from MALLET when fed with Cabinet Papers. Some of the terms comprising the first 20 topics can be seen

What MALLET doesn’t do is work out what these topics actually mean. But often this a relatively straightforward process. If we look at this image, in topic 1 we see words like ‘corporation’, ‘broadcasting’, ‘post office’, ‘wireless’, ‘television’, and so on. Clearly this topic is about communications and broadcasting. Once we have this topic it can guide us quickly to documents which are rich in this kind of content. Other topics identified included ‘Aviation and spaceflight’, ‘Naval warfare’, ‘India and the Middle East’, ‘East Asia’, ‘Health and Safety’, and so on.

The most interesting topics are those which seem to cluster material that could not be located via a keyword search. For example, topic 22 clustered together an unusual group of documents in which the Cabinet Secretary Sir Norman Brook appears to give his personal opinions on the items being discussed and the Ministers discussing them around the Cabinet Table:

‘The First Lord [Winston Churchill] took the conclusion very manfully and, to be candid, much better than I expected…[although he] was not to be denied his little bit of fun.’

‘This, of course, was a terrific bombshell. Everyone was amazed.’

These documents have a quite different vocabulary from the studiedly neutral language normally employed in Cabinet minutes and the algorithm is able to treat such documents differently from other papers.

However, this approach can also be haphazard. For instance, I have a topic which I called ‘law of the sea’ because it appeared to be comprised of documents discussing naval conflict in a legalistic context, encompassing fishing disputes or what is permissible after the sinking of a hospital ship. So what is this document doing in there? In the sense of the language employed there’s clearly a kind of ghastly similarity between a document about legal rights to shoot pheasants and a document about when it is appropriate to fire on vessels, but something has gone wrong here. Either the topic isn’t entirely what I thought it was or we need to reduce the threshold at which we regard documents as ‘belonging’ to particular topics.

The History Lab have published these results on their website, offering a new way to explore the Cabinet Papers – take a look. You can see the relative sizes of the topics: again, this allows us to make an assessment. Can ‘eyewitness accounts’ – a topic I identified because of the vivid language of the documents with the highest value – really be the largest topic? That doesn’t seem right. At any rate, by providing an interface, History Lab make it easy to begin the process of doing this better next time. Turning MALLET output into something explorable is not straightforward and my particular thanks goes to Rohan Shah for transforming my output into something that can be seen online.

If you fancy turning your hand to a bit of digital history, Megan Brett has written an excellent introduction to topic modelling in the digital humanities. These tools will be of more and more value in the future as we try to make sense of the huge quantities of data generated by contemporary political institutions: the Obama Presidential Library will contain over 300 million emails. Computers made this mess of data; they should help us clear it up.

TRANSFORMATION FROM GLORY TO GLORY